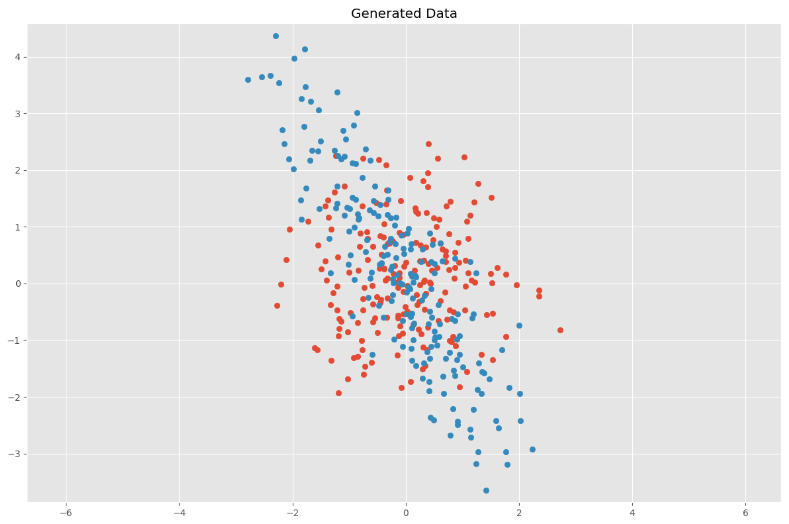

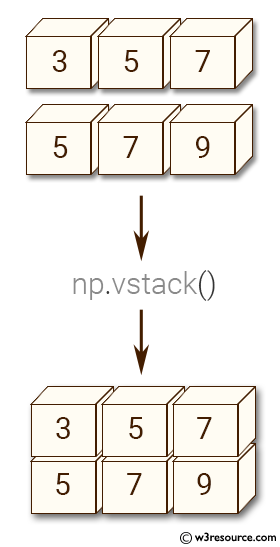

```python
# import libraries
from sklearn.model_selection import train_test_split
from sklearn.linear_model import LogisticRegression
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import r2_score, mean_squared_error, confusion_matrix, precision_score, recall_score, accuracy_score, f1_score
from sklearn.preprocessing import LabelEncoder
from sklearn.preprocessing import MinMaxScaler
```

The focus was to extract the features, train the model and see how it performs with minimal tuning. I tried three ML algorithms: LogisticRegression (LR), RandomForestClassifier (RFC) and Support Vector Machine (SVM). RFC performed best (close to 50% for raw pixels and 60% accuracy / precision for global features), but for local points of interest with ORB and BOVW, SVM had better performance. I downloaded some images from the web and tried to predict them: the model trained on global features got most of them right, but the one trained on local features did pretty poorly. The models can be refined and improved by providing more samples (the full dataset is around 225MB), adding more features, and combining both global and local features to increase model performance. There is no one size fits all: the feature engineering should consider various feature extraction techniques and ways of converting them to feature vectors, and will depend on your target use cases. Using cloud services like Google Cloud AutoML definitely saves tons of time and avoids having to navigate the different image processing and extraction options.
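The comparison of the three classifiers described above can be sketched roughly as follows. This is a minimal illustration and not the code used for the results in this post: the feature matrix is synthetic (generated with `make_classification` to mimic 516 samples across 5 classes), and the 40-feature width, the 80/20 split and the hyperparameters are all assumptions picked for the example.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score, f1_score, precision_score
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import MinMaxScaler
from sklearn.svm import SVC

# Synthetic stand-in for extracted feature vectors: 516 samples, 5 classes.
X, y = make_classification(n_samples=516, n_features=40, n_informative=12,
                           n_classes=5, random_state=0)

# Hold out 20% of the samples for testing, keeping class proportions equal.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)

# Fit the scaler on the training split only, then apply it to both splits.
scaler = MinMaxScaler().fit(X_train)
X_train, X_test = scaler.transform(X_train), scaler.transform(X_test)

models = {
    "LR": LogisticRegression(max_iter=1000),
    "RFC": RandomForestClassifier(n_estimators=100, random_state=0),
    "SVM": SVC(),
}

results = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    pred = model.predict(X_test)
    # Macro averaging treats the 5 classes equally.
    results[name] = {
        "accuracy": accuracy_score(y_test, pred),
        "precision": precision_score(y_test, pred, average="macro", zero_division=0),
        "f1": f1_score(y_test, pred, average="macro"),
    }
    print(name, results[name])
```

With real image features, `X` and `y` would come from the feature extraction step and a `LabelEncoder` applied to the directory names; the numbers printed on synthetic data say nothing about the flower results.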
You can look at features from an image in 2 buckets: global features and local features (key regions and points of interest). Global features focus on shape, color and texture across the full image. Usually these features are then combined to create the global feature vectors that will be fed into the classifiers. Local features quantify regions in and around keypoints of interest and their descriptors; they are extracted using multiple algorithms, the most popular of which are SURF, ORB, SIFT and BRIEF. If you want to learn more about the different feature extraction techniques, visit the OpenCV page.

For the purpose of this exercise, I used the flowers dataset from Kaggle. Due to limited processing resources I used a subset of the images (516 samples in total), evenly distributed across 5 flower classes, which serve as our labels. The images were stored under directories named after their corresponding labels.

Summarizing the steps to go through building your model:

- Loading data: organize and load the images dataset.
- Preprocessing: includes image resizing for consistency in size, feature engineering and feature extraction, and converting into feature vectors. This is the most important phase of the process.
- Build and test model: split the data for training and testing, train the model with the training data, then validate the model using the test data and evaluation metrics, and make predictions on new images.

Overall, I tried 3 scenarios for feature extraction and classification:

- Raw pixel values used directly as feature vectors.
- Global feature extraction with HuMoments (shape), Haralick (texture) and histogram (color), combined to create global feature vectors.
- Local features with ORB and Bag of Visual Words (BOVW using KMeans), translating keypoints and feature descriptors into feature vectors.
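To make the Bag of Visual Words step more concrete, here is a minimal sketch of how per-image descriptors can be turned into fixed-length feature vectors with KMeans. It is an illustration under assumptions, not the exact code behind this post: the descriptors are random arrays standing in for ORB output (ORB produces 32-byte descriptors per keypoint), and the vocabulary size of 20 and the helper name `bovw_histogram` are made up for the example.

```python
import numpy as np
from sklearn.cluster import KMeans

rng = np.random.default_rng(42)

# Hypothetical stand-in for ORB output: each of 10 images yields a variable
# number of 32-dimensional descriptors.
descriptors_per_image = [
    rng.integers(0, 256, size=(int(n), 32)).astype(np.float32)
    for n in rng.integers(50, 120, size=10)
]

# 1. Build the visual vocabulary by clustering all descriptors together.
n_words = 20  # vocabulary size, an arbitrary choice for this sketch
kmeans = KMeans(n_clusters=n_words, n_init=10, random_state=0)
kmeans.fit(np.vstack(descriptors_per_image))

def bovw_histogram(descriptors, model, n_words):
    """Assign each descriptor to its nearest visual word and count the words."""
    words = model.predict(descriptors)
    hist = np.bincount(words, minlength=n_words).astype(np.float32)
    # Normalise so images with different keypoint counts stay comparable.
    return hist / hist.sum()

# 2. One fixed-length feature vector per image, ready for a classifier.
X = np.array([bovw_histogram(d, kmeans, n_words) for d in descriptors_per_image])
print(X.shape)
```

In a real pipeline the descriptors would come from a keypoint detector run on each image, and the vocabulary would be fitted on the training images only so that test images do not influence the clusters.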
Image classification can be approached in multiple ways. For developing a basic classifier you can use the "raw pixel" approach, but it is not good enough for complex features and classification tasks. So we use "object based" detection and feature extraction techniques to get the various features and transform them into feature vectors to be fed into our ML algorithms.
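As a rough illustration of the "raw pixel" approach, the sketch below shrinks an image to a fixed size and flattens it into one feature vector. It makes a few assumptions: the image is a random array rather than a real photo, the 32 x 32 target size is arbitrary, and a NumPy block average stands in for a proper image resize (such as the one OpenCV provides) so that the example has no extra dependencies. The function name `raw_pixel_features` is hypothetical.

```python
import numpy as np

def raw_pixel_features(image, size=32):
    """Shrink an H x W x C uint8 image to size x size by block averaging, then flatten."""
    h, w, c = image.shape
    # Crop so both sides are exact multiples of `size` (assumes h, w >= size).
    bh, bw = h // size, w // size
    cropped = image[: bh * size, : bw * size].astype(np.float32)
    # Average each bh x bw block to get one output pixel per block.
    small = cropped.reshape(size, bh, size, bw, c).mean(axis=(1, 3))
    # Scale to [0, 1] and flatten into a single feature vector.
    return (small / 255.0).ravel()

# Hypothetical stand-in for a loaded photo: random 240 x 320 RGB pixels.
rng = np.random.default_rng(0)
fake_image = rng.integers(0, 256, size=(240, 320, 3), dtype=np.uint8)
features = raw_pixel_features(fake_image)
print(features.shape)
```

Every image ends up as a vector of the same length regardless of its original size, which is what the classifiers need, but the vector carries no notion of shape or texture, which is why this approach struggles on harder tasks.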

I used OpenCV for the purpose of this exercise. Eventually (my next adventure) I want to get to using Keras and TensorFlow to leverage the more robust capabilities these libraries have to offer.

Following the last effort around sentiment analysis, I wanted to manually program my way to an image classification model using OpenCV and scikit-learn, to see how close I get to the out-of-the-box effort with Google Cloud AutoML. Image recognition and classification is an interesting and complex topic, and there are many different approaches to get to the outcome you are looking for. My goal for this exercise was to demystify the process of image classification and build a reasonable model to predict images. There are multiple libraries to leverage (OpenCV, scikit-image, Python Imaging Library, etc.).
